This post was updated on 27 June (UK time); an earlier version of the same story appears below.

With yesterday’s games, all 48 group matches at the World Cup have been completed. There have been expected victories for some nations, big upsets for others – Adios Spain! Bye-bye England! – and more goals than most fans would have expected. So who could have forecast this? Actually, a huge variety of ‘experts’, forecasters, theorists, modelers and systems have tried to predict the outcome of this tournament, from Goldman Sachs to boffin statistical organisations. In his latest post for Sportingintelligence, and as part of an ongoing evaluation of rates of success (click HERE for Part 1 and background and click HERE for Part 2), Roger Pielke Jr sorts the best from the rest.

Follow Roger on Twitter: @RogerPielkeJR and on his blog


By Roger Pielke Jr.

27 June 2014

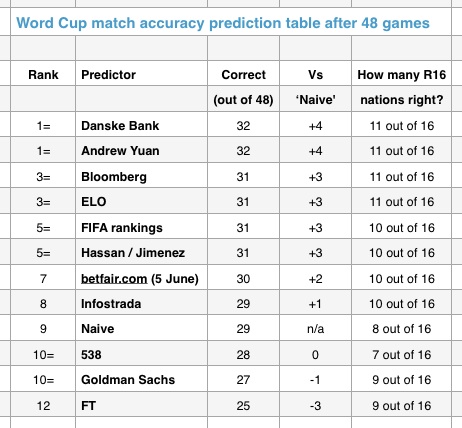

The group stage is over, and after 48 matches we can declare a winner in the first part of the World Cup prediction evaluation exercise. We made a ‘naive’ prediction ourselves based on the financial value of the squads; and we’re comparing this to 11 other predictions made by parties ranging from bankers and bookies to boffins and FIFA rankings.

Congratulations to Danske Bank and Andrew Yuan, who take joint first, each picking 32 matches correctly and 11 of the 16 teams which advanced. The Elo ratings and Bloomberg also picked 11 of the 16 teams to advance but fell one short overall, picking 31 matches.


The FIFA rankings fall fifth despite having only one match picked differently than Yuan, illustrating the fine edge to predictive success. Hassan and Jimenez tied the FIFA rankings, despite producing their forecast last February. The pre-group stage odds from Betfair.com are next, followed closely by Infostrada.

Each of the seven methods discussed so far showed skill in that they outperformed the naïve baseline based on the estimated transfer market value of each of the teams. Still, the naïve baseline finished just four games out of first, although it anticipated only eight of the 16 teams that moved on. That is the same as the number of teams advancing in Brazil that also advanced in South Africa in 2010, which could have been used as another naïve baseline.
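The notion of skill used here is simply a method's margin over the baseline. As a minimal sketch in Python, using only the match counts stated in this post: the baseline's figure of 28 is inferred from its finishing four games behind the leaders' 32, and the function name `skill_vs_baseline` is made up for illustration.

```python
# Correct group-stage picks (out of 48) as stated in this post.
# The baseline figure of 28 is inferred: four games behind the leaders' 32.
correct_picks = {
    "Danske Bank": 32,
    "Andrew Yuan": 32,
    "Elo ratings": 31,
    "Bloomberg": 31,
    "Naive baseline": 28,
}


def skill_vs_baseline(picks, baseline="Naive baseline"):
    """Return each method's margin over the baseline, in matches."""
    base = picks[baseline]
    return {name: n - base for name, n in picks.items() if name != baseline}


margins = skill_vs_baseline(correct_picks)
for name, margin in sorted(margins.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {margin:+d} matches vs. baseline")
```

A positive margin means the method showed skill in the sense used above; a negative one would put it in "why bother?" territory.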

Three predictions win the “why bother?” award by under-performing the naïve baseline – 538, which only picked seven of the advancing squads, Goldman Sachs and the FT. The latter was included in the evaluation despite not being proposed as a forecasting tool. The other two don’t have that excuse for their underperformance.

The main lesson that I’d suggest taking from the exercise thus far is that it is very difficult to generate predictions that can outperform a fairly simple baseline approach. It is even more difficult to outperform the existing ratings systems of FIFA and Elo. All 10 methods were just one match away from underperforming the FIFA rankings. Ultimately, most of these prediction methods are of certain entertainment value but of uncertain value as prognostications.

Of course separating luck from skill is not possible in such an exercise. The strong performance of ranking systems in 2014 was in part due to the low number of upsets (7 vs. 14 in 2010) and draws (9 vs. 14 in 2010).

Consider that in 2006 and 2010 the FIFA rankings would have correctly predicted 26 and 20 matches (of 48) respectively in the group stages (data courtesy of @roddycampbell).

So was Danske Bank lucky and Goldman Sachs unlucky? Or was the former actually a more skilled forecaster? These are all good questions for the pub as the data do not provide answers.

We are now in a position to set the stage for part 2 of the prediction evaluation. Before I describe how I have chosen to evaluate the matches for this phase of the contest, let me remind you that there are many different ways to structure such an evaluation. I don’t think that there is any single best way, but it is important to be clear about procedure before evaluating. You don’t want to find yourself setting up the rules for evaluating a prediction after the fact, especially if you are one of those offering predictions.

* I use each method’s overall ranking of the teams as presented before the tournament began, even though several forecasters are providing updated predictions as the tournament unfolds and the betting odds obviously change.

* If no such ranking was provided I use instead the ranked probability to advance from the group stage.

* As before, I convert probabilistic predictions into deterministic forecasts. There are obviously no draws in the knockout stage.

* I will generate a prediction for each method for each match. In other words, there will be a total of 15 matches predicted by each method over the knockout stage, regardless of how they do in each round.

* At the end of the tournament I will provide a ranking for predictions in the knock-out stage as well as an overall ranking based on both the group stage and the knock-out stage predictions.
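The procedure above can be sketched in a few lines of Python. This is an illustration only, not the code used for this evaluation: the function names (`most_likely`, `pick_winner`, `score_method`) and the team names and results are all hypothetical.

```python
def most_likely(probabilities):
    """Turn a probabilistic forecast into a deterministic pick.

    `probabilities` maps each possible outcome to its forecast probability;
    the outcome with the highest probability is taken as the prediction.
    """
    return max(probabilities, key=probabilities.get)


def pick_winner(team_a, team_b, ranking):
    """Pick whichever team appears earlier (ranks higher) in `ranking`."""
    return team_a if ranking.index(team_a) < ranking.index(team_b) else team_b


def score_method(ranking, results):
    """Count matches in which the higher-ranked team actually won.

    `results` is a list of (team_a, team_b, winner) tuples.
    """
    return sum(pick_winner(a, b, ranking) == w for a, b, w in results)


# Hypothetical pre-tournament ranking and knockout results.
example_ranking = ["Team A", "Team B", "Team C", "Team D"]
example_results = [
    ("Team A", "Team D", "Team A"),  # higher-ranked team won
    ("Team B", "Team C", "Team C"),  # upset
    ("Team A", "Team C", "Team A"),  # higher-ranked team won
]

score = score_method(example_ranking, example_results)
print(f"{score} of {len(example_results)} matches picked correctly")
```

Because the ranking is fixed before the tournament, each method gets a pick for every knockout match, even when its own earlier picks have already been eliminated.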

For the upcoming round of 16 matches, every method is in agreement on six of the matches, with the favourites as unanimous selections: Brazil, Argentina, France, Germany, Greece and the Netherlands. A majority favour Colombia and Belgium, but Uruguay and the USA get a few nods.

24 June 2014

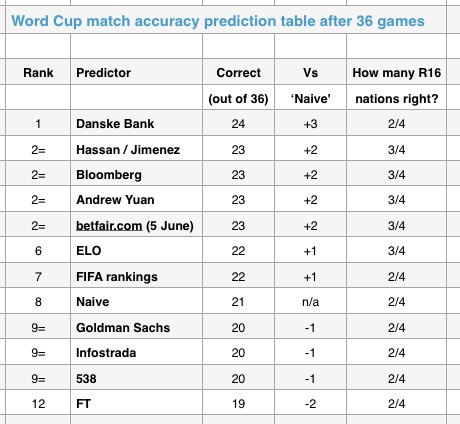

We are fast approaching the end of the group stages, and the battle for the top of the prediction league table is tight. Five approaches are within one game of the lead after 36 of the 48 matches have been played.

For a detailed explanation of the predictors, follow the links above, but to summarise, we made a ‘naive’ prediction ourselves based on the financial value of the squads; and we’re comparing this to 11 other predictions made by parties ranging from bankers and bookies to boffins and FIFA rankings. Those FIFA rankings have held sway … until now.

Sitting alone at the top is Danske Bank, which has the most games picked correctly overall. Among the leaders at the halfway point were the FIFA rankings and Andrew Yuan, which, as I noted, had 47 of their 48 picks in common.

Here is the table after 36 games.

Yuan took the one match on which they split (Mexico-Croatia), ensuring that the FIFA rankings cannot finish first. The naive baseline has had a good run, passing four of the methods, and now trails the two rankings, FIFA and Elo, by just one game.

I’ve added an additional method of ranking the predictions, according to the number of countries picked to advance from the group stage. All methods have already slipped from perfection, with only five approaches correctly picking 3 of the 4 teams so far to advance. The others, including Danske Bank at the top of the table, only have 2 of the 4. It just goes to show that prediction evaluation is highly sensitive to the metrics of assessment that are used.
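To see how the choice of metric can reorder a table, here is a small Python sketch. The tallies are hypothetical and are not the actual standings; the method names and the helper `rank_by` are invented for illustration.

```python
# Hypothetical tallies, for illustration only (not the actual table).
tallies = {
    "Method X": {"matches": 25, "advancing": 2},
    "Method Y": {"matches": 24, "advancing": 3},
    "Method Z": {"matches": 23, "advancing": 3},
}


def rank_by(tallies, metric):
    """Return method names ordered best-first on the chosen metric."""
    return sorted(tallies, key=lambda name: tallies[name][metric], reverse=True)


by_matches = rank_by(tallies, "matches")
by_advancing = rank_by(tallies, "advancing")

print("Ranked by matches picked:  ", by_matches)
print("Ranked by teams advancing: ", by_advancing)
```

In this made-up example, the method that leads on matches picked drops to last when ranked by teams picked to advance, which is the same kind of reversal described above.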

Looking ahead, all methods have picked France, Argentina, Germany, Belgium and Russia to advance. But no method has picked Costa Rica, and only two have the USA. On Friday I’ll provide a summary of the group stage of the competition and set the table for the knockout stage.


Roger Pielke Jr. is a professor of environmental studies at the University of Colorado, where he also directs its Center for Science and Technology Policy Research. He studies, teaches and writes about science, innovation, politics and sports. He has written for The New York Times, The Guardian, FiveThirtyEight, and The Wall Street Journal among many other places. He is thrilled to join Sportingintelligence as a regular contributor. Follow Roger on Twitter: @RogerPielkeJR and on his blog
